Exam AI — IGCSE exam-style practice platform

Authentic exam-style questions and mark-scheme aligned feedback to build exam readiness.

Overview

Exam AI is an exam-practice platform that helps students prepare for IGCSE exams through authentic, exam-style questions and detailed, mark-scheme aligned feedback. The product focuses on exam readiness: it replicates the structure, difficulty, and expectations of real papers so that learners build confidence and accuracy under timed conditions.

The challenge

Students often spend much of their revision time on passive study or generic quizzes that don't reflect real exam demands. Common gaps include:

- Limited exposure to true exam-style prompts and the nuance of mark schemes

- Feedback that says what is wrong, but not why it's wrong or how to improve

- Difficulty identifying weaknesses across topics and tracking progress over time

- Lack of structured practice that builds exam technique, not just knowledge recall

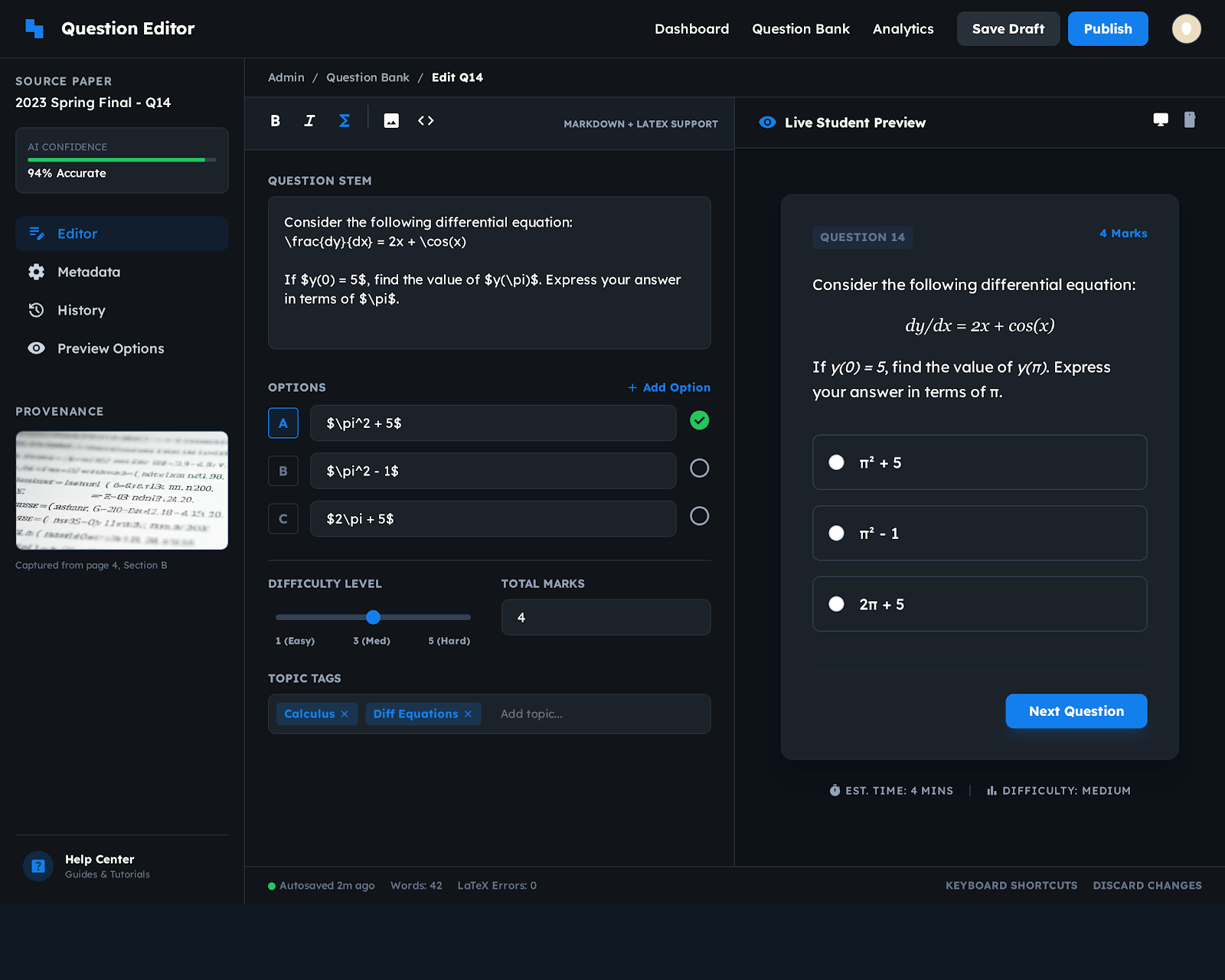

What we built

The platform provides a structured practice experience that prioritizes realistic assessment:

- Exam-style question practice across subjects and topics

- Mark-scheme aligned feedback to help students understand how marks are awarded

- Progressive difficulty and targeted practice loops to reinforce weak areas

- Guided improvement that focuses on technique (interpreting prompts, structuring responses, showing working)
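To make "mark-scheme aligned feedback" concrete, here is a minimal sketch of awarding marks point by point and reporting which points were credited or missed. The `MarkPoint` class, the `mark_answer` function, and the sample scheme are hypothetical names invented for illustration, and the keyword matching is a deliberately simple stand-in for whatever evaluation the platform actually performs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarkPoint:
    """One creditable point in a hypothetical mark scheme."""
    description: str            # what the examiner wants to see
    keywords: tuple[str, ...]   # any one of these counts as evidence
    marks: int = 1


def mark_answer(answer: str, scheme: list[MarkPoint]) -> dict:
    """Award marks per point and list what was credited or missed."""
    text = answer.lower()
    awarded, missed = [], []
    for point in scheme:
        # Assumption: naive substring matching stands in for real evaluation.
        hit = any(keyword in text for keyword in point.keywords)
        (awarded if hit else missed).append(point)
    return {
        "score": sum(p.marks for p in awarded),
        "out_of": sum(p.marks for p in scheme),
        "credited": [p.description for p in awarded],
        "to_improve": [p.description for p in missed],
    }


# Illustrative scheme for a three-mark question (not taken from a real paper).
scheme = [
    MarkPoint("States that plants absorb light energy", ("light",)),
    MarkPoint("Names chlorophyll as the pigment", ("chlorophyll",)),
    MarkPoint("Gives glucose as a product", ("glucose",)),
]
result = mark_answer("Chlorophyll traps light to make food.", scheme)
print(result["score"], "/", result["out_of"], "| missed:", result["to_improve"])
```

Returning the missed points alongside the score is what lets feedback explain how marks are awarded, instead of reporting only a total.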

Learning design (what makes it effective)

- Technique-first feedback: guidance on approach, not just correctness

- Targeted reinforcement: repeated practice on the same concept in varied forms

- Clear progression: learners can move from fundamentals to exam-level difficulty with visibility into readiness

- Consistency: feedback is structured so students can internalize patterns and apply them in real exams
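Targeted reinforcement depends on knowing where a learner is weakest. The sketch below shows one possible way to choose the next practice topics from past attempts. The function names, the 0.7 threshold, and the attempt format are all assumptions made for this example, not details of the platform.

```python
from collections import defaultdict


def topic_accuracy(attempts):
    """Return the fraction of available marks earned per topic."""
    totals = defaultdict(lambda: [0, 0])  # topic -> [earned, available]
    for topic, earned, available in attempts:
        totals[topic][0] += earned
        totals[topic][1] += available
    return {t: e / a for t, (e, a) in totals.items() if a > 0}


def next_practice_topics(attempts, threshold=0.7, limit=2):
    """Pick the weakest topics below the (assumed) readiness threshold."""
    accuracy = topic_accuracy(attempts)
    weak = [t for t, value in accuracy.items() if value < threshold]
    return sorted(weak, key=lambda t: accuracy[t])[:limit]


# Each attempt: (topic, marks earned, marks available). Illustrative data only.
attempts = [
    ("algebra", 3, 4),
    ("algebra", 4, 4),
    ("trigonometry", 1, 4),
    ("trigonometry", 2, 4),
    ("probability", 2, 4),
]
topics = next_practice_topics(attempts)
print(topics)
```

The same per-topic accuracy figures could also feed a readiness view, since they show how close each topic is to the chosen threshold.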

AI workflow orchestration

To keep evaluation and feedback consistent and debuggable, AI steps are orchestrated as explicit workflows:

- Question generation: producing practice questions with controllable difficulty and topic coverage

- Answer evaluation: assessing responses in line with exam expectations

- Feedback sequencing: delivering feedback in a structured order (concept → method → exam technique → next steps)

LangGraph coordinates these steps so that outcomes are traceable and behavior remains consistent as the system evolves.
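The idea of an explicit, traceable workflow can be sketched in plain Python. This is not LangGraph code and not the platform's implementation. It is a dependency-free illustration in which each step is a named function over shared state and the runner records which steps ran. All function names, state keys, and placeholder logic are assumptions.

```python
from typing import Callable

State = dict  # shared state passed from step to step

# Feedback order taken from the list above.
FEEDBACK_ORDER = ("concept", "method", "exam_technique", "next_steps")


def generate_question(state: State) -> State:
    # Placeholder: a real step would call a model with difficulty/topic controls.
    question = f"[{state['difficulty']}] Explain {state['topic']}."
    return {**state, "question": question}


def evaluate_answer(state: State) -> State:
    # Placeholder: an empty answer scores 0, anything else scores 1.
    return {**state, "score": 1 if state["answer"].strip() else 0}


def sequence_feedback(state: State) -> State:
    # Arrange whatever notes exist into the fixed feedback order.
    notes = state.get("notes", {})
    ordered = [(key, notes.get(key, "")) for key in FEEDBACK_ORDER]
    return {**state, "feedback": ordered}


STEPS: list[tuple[str, Callable[[State], State]]] = [
    ("generate_question", generate_question),
    ("evaluate_answer", evaluate_answer),
    ("sequence_feedback", sequence_feedback),
]


def run_workflow(state: State) -> State:
    """Run each step in order and record a trace of step names."""
    trace = []
    for name, step in STEPS:
        state = step(state)
        trace.append(name)
    return {**state, "trace": trace}


# For brevity the learner's answer is supplied up front; a real run would
# pause after question generation and wait for the response.
out = run_workflow({
    "topic": "photosynthesis",
    "difficulty": "core",
    "answer": "Plants use light to make glucose.",
    "notes": {"concept": "Correct idea.", "next_steps": "Name the pigment."},
})
print(out["trace"])
```

Keeping each step as a separate named unit is what makes a run debuggable: the trace shows exactly which steps executed and in what order. A graph framework such as LangGraph provides the same structure with nodes and edges, plus branching between steps.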

Technical approach

The system combines real-time web interaction with heavier AI processing:

- Backend/services: Node.js for application workflows and APIs

- AI services: Python for advanced processing and model integrations

- Orchestration: LangGraph for multi-step evaluation and feedback pipelines

Outcome

Exam AI helps students shift from rote revision to active, exam-focused practice by combining realistic questions with structured, mark-scheme-aware feedback. This deliberate practice supports both subject mastery and stronger exam performance.

Want to build something like this?

We build AI-powered learning and assessment platforms with orchestrated workflows.